File:Suomen koronavirustapaukset ja kuolemat paivittain syksy 2020 1.svg

Original file (SVG file, nominally 961 × 365 pixels, file size: 84 KB)

Summary

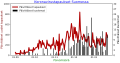

| Description | English: Daily coronavirus cases and deaths in Finland in autumn 2020 |
|---|---|
| Date | |
| Source | Own work |
| Author | Merikanto |

Sources of data: THL, Helsingin Sanomat, Janne Solanpää, ECDC, 28.1.2021

Wikipedia's timeline of the coronavirus pandemic in Finland

https://fi.wikipedia.org/wiki/Suomen_koronaviruspandemian_aikajana

Online COVID-19 data aggregate

https://datahub.io/core/covid-19/r/countries-aggregated.csv

Janne Solanpää's forecast page, from which

https://covid19.solanpaa.fi/data/fin_cases.json

Also THL open data:

paivittaiset_tapaukset="https://sampo.thl.fi/pivot/prod/fi/epirapo/covid19case/fact_epirapo_covid19case.json?row=measure-444833&column=dateweek20200101-508804L"

paivittaiset_kuolemat="https://sampo.thl.fi/pivot/prod/fi/epirapo/covid19case/fact_epirapo_covid19case.json?row=measure-492118&column=dateweek20200101-508804L"

Python code to produce graph

# COVID-19 statistics from aggregated data from a website
# with Python
# Input from internet site: cases, recovered, deaths.
# Calculates active cases.
# version 0000.0009
# 16.2.2020
#

# parameters

paiva1="2020-09-01"

paiva2="2021-02-12"

ymax1=800

ymax2=30

import math as math

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import matplotlib.ticker as ticker

import locale

from datetime import datetime, timedelta

import matplotlib.dates as mdates

from dateutil import rrule, parser

from scipy import interpolate

import scipy.signal

from matplotlib.ticker import (MultipleLocator, FormatStrFormatter,

AutoMinorLocator, MaxNLocator)

from scipy.signal import savgol_filter

from bs4 import BeautifulSoup

import requests

import json

locale.setlocale(locale.LC_ALL, 'fi_FI')

def format_func(value, tick_number):
    N = int(np.round(value/10))
    if N == 0:
        return "0"
    else:
        return r"${0}\pv$".format(N)

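# very basic exponential r0 calculation
def calculate_r0(time1, time2, val1, val2):
    k=0
    td=time2-time1
    ##
    #optim
    #td=1
    # exponential growth rate per time step between the two observations
    gr0=math.log(val2/val1)
    gr=gr0/td
    if(gr!=0):
        # doubling time
        td= math.log(2.0)/gr
    else:
        return(1)
    # time constant (in days) used to convert the growth rate to r0
    tau=5.0
    k=math.log(2.0)/td
    r0=math.exp(k*tau)
    # clamp implausibly large values
    if(r0==32):
        r0=1
    if(r0>32):
        r0=4
    return(r0)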
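The arithmetic in `calculate_r0` can be checked on a small example. The sketch below is a standalone restatement, not the script's own function: it assumes pure exponential growth between two observations and reuses the 5-day time constant (`tau=5.0`) from the code above. The helper name `growth_to_r` is hypothetical.

```python
import math

def growth_to_r(t1, t2, v1, v2, tau=5.0):
    """Estimate R from two observations, assuming exponential growth."""
    growth_rate = math.log(v2 / v1) / (t2 - t1)  # per-day exponential growth rate
    return math.exp(growth_rate * tau)           # growth factor over tau days

# Cases doubling from 100 to 200 in 5 days: the growth factor over 5 days is 2.
r_doubling = growth_to_r(0, 5, 100.0, 200.0)

# Flat case counts over 21 days: the growth factor is 1.
r_flat = growth_to_r(0, 21, 150.0, 150.0)
```

Because the doubling time is only an intermediate step, the result reduces to `exp(growth_rate * tau)`, which is what the sketch computes directly.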

def cut_by_dates(dfx, start_date, end_date):
    mask = (dfx['Date'] >= start_date) & (dfx['Date'] <= end_date)
    dfx2 = dfx.loc[mask]
    #print(dfx2)
    return(dfx2)

def load_country_cases(maa):
    dfin = pd.read_csv('https://datahub.io/core/covid-19/r/countries-aggregated.csv', parse_dates=['Date'])
    countries = [maa]
    dfin = dfin[dfin['Country'].isin(countries)]
    #print (head(dfin))
    #quit(-1)
    selected_columns = dfin[["Date", "Confirmed", "Recovered", "Deaths"]]
    df2 = selected_columns.copy()
    df=df2
    len1=len(df["Date"])
    aktiv2= [None] * len1
    for n in range(0,len1-1):
        aktiv2[n]=0
    dates=df['Date']
    rekov1=df['Recovered']
    konf1=df['Confirmed']
    death1=df['Deaths']
    #print(dates)
    spanni=6
    #print(rekov1)
    #quit(-1)
    # recovered counts smoothed twice with a rolling mean
    rulla = rekov1.rolling(window=spanni).mean()
    rulla2 = rulla.rolling(window=spanni).mean()
    tulosrulla=rulla2
    tulosrulla= tulosrulla.replace(np.nan, 0)
    tulosrulla=np.array(tulosrulla).astype(int)
    rulla2=tulosrulla
    x=np.linspace(0,len1,len1);
    #print("kupla")
    #print(tulosrulla)
    #print(konf1)
    #print(death1)
    #print(aktiv2)
    konf1=np.array(konf1).astype(int)
    death1=np.array(death1).astype(int)
    #print(konf1)
    #quit(-1)
    for n in range(0,(len1-1)):
        #print("luzmu")
        rulla2[n]=tulosrulla[n]
        #print ("luzmu2")
        #aktiv2[n]=konf1[n]-death1[n]-rulla2[n]
        aktiv2[n]=konf1[n]
        #print(rulla2[n])
    #quit(-1)
    #aktiv3=np.array(aktiv2).astype(int)
    # daily values as differences of the cumulative counts, negatives set to zero
    dailycases1= [0] * len1
    dailydeaths1= [0] * len1
    for n in range(1,(len1-1)):
        dailycases1[n]=konf1[n]-konf1[n-1]
        if (dailycases1[n]<0): dailycases1[n]=0
    for n in range(1,(len1-1)):
        dailydeaths1[n]=death1[n]-death1[n-1]
        if (dailydeaths1[n]<0): dailydeaths1[n]=0
    #quit(-1)
    df.insert (2, "Daily_Cases", dailycases1)
    df.insert (3, "Daily_Deaths", dailydeaths1)
    df['ActiveEst']=aktiv2
    #print (df)
    dfout = df[['Date', 'Confirmed','Deaths','Recovered', 'ActiveEst','Daily_Cases','Daily_Deaths']]
    #print(df)
    #print(dfout)
    #print(".")
    return(dfout)
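The two loops above that turn cumulative counts into daily counts can also be written with pandas. This is a minimal sketch, assuming a cumulative series like the `Confirmed` column; the sample numbers are made up for illustration. Unlike the loops, which stop at `len1-1`, it also fills the last row.

```python
import pandas as pd

# Made-up cumulative counts; the dip at index 3 mimics a downward data correction.
confirmed = pd.Series([10, 15, 15, 14, 20])

# diff() gives the day-to-day change; the first row has no predecessor, so it is set to 0.
# clip(lower=0) zeroes negative changes, as the loops above do.
daily_cases = confirmed.diff().fillna(0).clip(lower=0).astype(int)
```

The same expression would apply to a cumulative deaths column.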

def load_fin_wiki_data():
    url="https://fi.wikipedia.org/wiki/Suomen_koronaviruspandemian_aikajana"
    response = requests.get(url)
    soup = BeautifulSoup(response.text, 'lxml')
    table = soup.find_all('table')[0] # Grab the first table
    df = pd.read_html(str(table))[0]
    #print(df)
    # columns: Päivä Tapauksia Uusia tapauksia Sairaalassa Teholla Kuolleita Uusia kuolleita Toipuneita
    # (day, cases, new cases, in hospital, in ICU, deaths, new deaths, recovered)
    df2 = df[['Tapauksia','Uusia tapauksia','Sairaalassa','Teholla','Kuolleita','Uusia kuolleita','Toipuneita']]
    kaikkiatapauksia=df['Tapauksia']
    toipuneita=df['Toipuneita']
    uusiatapauksia=df['Uusia tapauksia']
    sairaalassa=df['Sairaalassa']
    teholla=df['Teholla']
    kuolleita=df['Kuolleita']
    uusiakuolleita=df['Uusia kuolleita']
    len1=len(kaikkiatapauksia)
    kaikkiatapauksia2=[]
    toipuneita2=[]
    uusiatapauksia2=[]
    sairaalassa2=[]
    teholla2=[]
    kuolleita2=[]
    uusiakuolleita2=[]
    for n in range(0,len1):
        # keep only the digits of each cell before converting to int
        elem0=kaikkiatapauksia[n]
        elem1 = ''.join(c for c in elem0 if c.isdigit())
        elem2=int(elem1)
        kaikkiatapauksia2.append(elem2)
        elem0=toipuneita[n]
        elem1 = ''.join(c for c in elem0 if c.isdigit())
        toipuneita2.append(int(elem1))
        elem0=uusiatapauksia[n]
        elem1 = ''.join(c for c in elem0 if c.isdigit())
        uusiatapauksia2.append(int(elem1))
        elem0=sairaalassa[n]
        #elem1 = ''.join(c for c in elem0 if c.isdigit())
        sairaalassa2.append(int(elem0))
        elem0=teholla[n]
        #elem1 = ''.join(c for c in elem0 if c.isdigit())
        teholla2.append(int(elem0))
        elem0=kuolleita[n]
        #elem1 = ''.join(c for c in elem0 if c.isdigit())
        kuolleita2.append(int(elem0))
        elem0=uusiakuolleita[n]
        #elem1 = ''.join(c for c in elem0 if c.isdigit())
        uusiakuolleita2.append(int(elem0))
    #kaikkiatapauksia3=np.array(kaikkiatapauksia2).astype(int)
    #print("---")
    #print(kaikkiatapauksia2)
    #print(toipuneita2)
    kaikkiatapauksia3=np.array(kaikkiatapauksia2).astype(int)
    toipuneita3=np.array(toipuneita2).astype(int)
    uusiatapauksia3=np.array(uusiatapauksia2).astype(int)
    sairaalassa3=np.array(sairaalassa2).astype(int)
    teholla3=np.array(teholla2).astype(int)
    kuolleita3=np.array(kuolleita2).astype(int)
    uusiakuolleita3=np.array(uusiakuolleita2).astype(int)
    # dates are generated from a fixed start day, one per table row
    napapaiva1 = np.datetime64("2020-04-01")
    timedelta1= np.timedelta64(len(kaikkiatapauksia3),'D')
    napapaiva2 = napapaiva1+timedelta1
    #dada1 = np.linspace(napapaiva1.astype('f8'), napapaiva2.astype('f8'), dtype='<M8[D]')
    dada1 = pd.date_range(napapaiva1, napapaiva2, periods=len(kaikkiatapauksia3)).to_pydatetime()
    #print(dada1)
    data = {'Date':dada1,
        'Kaikkia tapauksia':kaikkiatapauksia3,
        "Uusia tapauksia":uusiatapauksia3,
        "Sairaalassa":sairaalassa3,
        "Teholla":teholla3,
        "Kuolleita":kuolleita3,
        "Uusiakuolleita":uusiakuolleita3,
        "Toipuneita":toipuneita3
        }
    df2 = pd.DataFrame(data)
    #print(kaikkiatapauksia3)
    #print ("Fin wiki data.")
    return(df2)

def plottaa_tapaukset_kuolemat(paivat, tapaukset, kuolemat):
    #left, right = plt.xlim()
    fig, ax1 = plt.subplots(constrained_layout=True)
    ax1.tick_params(axis='both', which='major', labelsize=15)
    ax1.set_xlabel('Päivämäärä', color='g',size=18)
    ax1.set_ylabel('Päivittäiset uudet tapaukset', color='#7f0000',size=18)
    ax1.set_title('Koronavirustapaukset Suomessa', color='b',size=22)
    ax1.plot(paivat, tapaukset, linewidth=6.5, color='#af0000', label="Päivittäiset tapaukset")
    # second y axis for daily deaths
    ax2 = ax1.twinx()
    ax1.set_ylim(0,ymax1)
    ax2.set_ylim(0,ymax2)
    ax2.set_ylabel('Päivittäiset kuolemat', color='black',size=18)
    ax2.tick_params(axis='both', which='major', labelsize=15)
    ax2.bar(paivat,kuolemat, linewidth=2, color='black',label="Päivittäiset kuolemat")
    # one combined legend for both axes
    lines1, labels1 = ax1.get_legend_handles_labels()
    lines2, labels2 = ax2.get_legend_handles_labels()
    ax2.legend(lines1 + lines2, labels1 + labels2, loc='upper left', fontsize=16)
    locator1 = mdates.MonthLocator()
    dateformat1 = mdates.DateFormatter('%d.%m')
    ax1.xaxis.set_major_formatter(dateformat1)
    ax1.xaxis.set_major_locator(locator1)
    ax2.yaxis.set_major_locator(MaxNLocator(integer=True))
    # save before show(): after an interactive show() the figure may already be closed
    plt.savefig('kuva.svg')
    plt.show()
    return(0)

def get_solanpaa_fi_data():
    url="https://covid19.solanpaa.fi/data/fin_cases.json"
    response = requests.get(url,allow_redirects=True)
    open('solanpaa_fi.json', 'w').write(response.text)
    with open('solanpaa_fi.json') as f:
        sola1=pd.read_json(f)
    #sola1_top = sola1.head()
    #print (sola1_top)
    #Rt […]
    #Rt_lower […]
    #Rt_upper […]
    #Rt_lower50 […]
    #Rt_upper50 […]
    #Rt_lower90 […]
    #Rt_upper90 […]
    #new_cases_uks […]
    #new_cases_uks_lower50 […]
    #new_cases_uks_upper50 […]
    #new_cases_uks_lower90 […]
    #new_cases_uks_upper90 […]
    #new_cases_uks_lower […]
    #new_cases_uks_upper […]
    dada1=sola1["date"]
    casa1=sola1["cases"]
    death1=sola1["deaths"]
    newcasa1=sola1["new_cases"]
    newdeath1=sola1["new_deaths"]
    hosp1=sola1["hospitalized"]
    icu1=sola1["in_icu"]
    rt=sola1["Rt"]
    newcasauks=sola1["new_cases_uks"]
    data = {'Date':dada1,
        'Tapauksia':casa1,
        'Kuolemia':death1,
        'Sairaalassa':hosp1,
        'Teholla':icu1,
        'Uusia_tapauksia':newcasa1,
        'Uusia_kuolemia':newdeath1,
        'R':rt,
        'Uusia_tapauksia_ennuste':newcasauks,
        }
    df = pd.DataFrame(data)
    return(df)

def get_ecdc_fi_hospital_data():
    url="https://opendata.ecdc.europa.eu/covid19/hospitalicuadmissionrates/json/"
    response = requests.get(url,allow_redirects=True)
    open('ecdc_hoic.json', 'w').write(response.text)
    with open('ecdc_hoic.json') as f:
        sola1=pd.read_json(f)
    #print(sola1.head())
    sola2=sola1.loc[sola1["country"]=='Finland']
    #sola2.to_csv (r'ecdc_hospital_finland_origo.csv', index = True, header=True, sep=';')
    #print(sola2.head())
    dada0=sola2["date"]
    hosp0=sola2["value"]
    country0=sola2["country"]
    len1=len(dada0)
    len2=int(len1/2)
    #print (len2)
    dada1=dada0[1:len2-1]
    hosp1=np.array(hosp0[1:len2-1])
    icu1=np.array(hosp0[len2:len1])
    #print(dada1)
    # debugging stop: the script exits here, so the lines below are not reached
    print (icu1)
    quit(-1)
    data = {'Date':dada1,
        'Sairaalassa':hosp1,
        'Teholla':icu1
        }
    df = pd.DataFrame(data)
    df.to_csv (r'ecdc_hospital_finland.csv', index = True, header=True, sep=';')
    return df

def get_thl_fi_open_data():
    ## thl open data, 1.2.2021
    # url1 and url2 were undefined here; these are the THL open data URLs listed in the summary above
    url1="https://sampo.thl.fi/pivot/prod/fi/epirapo/covid19case/fact_epirapo_covid19case.json?row=measure-444833&column=dateweek20200101-508804L"
    url2="https://sampo.thl.fi/pivot/prod/fi/epirapo/covid19case/fact_epirapo_covid19case.json?row=measure-492118&column=dateweek20200101-508804L"
    headers = {'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_5) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/50.0.2661.102 Safari/537.36'}
    response1 = requests.get(url1,headers=headers,allow_redirects=True)
    open('thl_cases1.json', 'w').write(response1.text)
    with open('thl_cases1.json') as json_file1:
        data1 = json.load(json_file1)
    #print(data1)
    headers = {'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_5) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/50.0.2661.102 Safari/537.36'}
    response2 = requests.get(url2,headers=headers,allow_redirects=True)
    open('thl_deaths1.json', 'w').write(response2.text)
    with open('thl_deaths1.json') as json_file2:
        data2 = json.load(json_file2)
    #print(data1)
    # date labels and values of the daily cases dataset
    k2=data1['dataset']
    k3=k2['dimension']
    k4=k3['dateweek20200101']
    k5=k4['category']
    k6=k5['label']
    k8a=k6.keys()
    k8b=k6.values()
    d1=k2['value']
    # date labels and values of the daily deaths dataset
    m2=data2['dataset']
    m3=m2['dimension']
    m4=m3['dateweek20200101']
    m5=m4['category']
    m6=m5['label']
    m8a=m6.keys()
    m8b=m6.values()
    d2=m2['value']
    #print (d1)
    d1a=d1.keys()
    d1b=d1.values()
    d2a=d2.keys()
    d2b=d2.values()
    #print (k8b)
    #print (d1a)
    #print (d1b)
    #print (d2a)
    #print (d2b)
    len1=len(k8b)
    #print(len1)
    #dates0=np.datetime64(np.array(list(k8b)))
    dates0=list(k8b)
    casekeys=np.array(list(d1a)).astype(int)
    cases0=np.array(list(d1b)).astype(int)
    deathkeys=np.array(list(d2a)).astype(int)
    deaths0=np.array(list(d2b)).astype(int)
    #print(dates0)
    #print(casekeys)
    #print(cases0)
    # zeros instead of np.empty, so dates without a reported value are 0 rather than arbitrary memory
    kasetab1=np.zeros(len1, dtype=int)
    kasetab1[casekeys]=cases0
    deathtab1=np.zeros(len1, dtype=int)
    deathtab1[deathkeys]=deaths0
    #print (len(dates0))
    #print (len(kasetab1))
    datax = {'Date':dates0,
        'Uusia_tapauksia':kasetab1,
        'Uusia_kuolemia':deathtab1
        }
    df = pd.DataFrame(datax)
    return(df)

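def cut_country_data_by_current(dfx, start_date):
    mask = (dfx['Date'] >= start_date)
    # copy, so that dropping a row does not act on a view of dfx
    dfx2 = dfx.loc[mask].copy()
    # drop the last (possibly incomplete) day; uses dfx instead of the global df
    dfx2.drop(dfx.tail(1).index,inplace=True)
    #print(dfx2)
    return(dfx2)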

# main program
#df=load_country_cases("Finland")
#df.to_csv (r'kovadata1.csv', index = True, header=True, sep=';')
#df=load_fin_wiki_data()
#print(df)
#quit(-1)
#df=get_thl_fi_open_data()
df=get_solanpaa_fi_data()
df.to_csv (r'kovadata0.csv', index = True, header=True, sep=';')
df2=cut_by_dates(df, paiva1,paiva2)
#df2=cut_country_data_by_current(df, paiva1)
print(df2)
#quit(-1)
df2.to_csv (r'kovadata2.csv', index = True, header=True, sep=';')
dates0=df2['Date']
#cases0=df2['Daily_Cases']
dailycases1=df2['Uusia_tapauksia']
dailydeaths1=df2['Uusia_kuolemia']
#dailycases1=df2['Daily_Cases']
#dailydeaths1=df2['Daily_Deaths']
date1 = paiva1
date2 = paiva2
datesx = list(rrule.rrule(rrule.DAILY, dtstart=parser.parse(date1), until=parser.parse(date2)))
#dates_a=dates0
dates_a=datesx
# smooth the daily cases, then estimate R0 from two points 21 days apart
dailycases_savgol_1 = scipy.signal.savgol_filter(dailycases1,7, 1)
pos1=len(dailycases_savgol_1)-2
time2=pos1-0
time1=pos1-21
val1=dailycases_savgol_1[time1]
val2=dailycases_savgol_1[time2]
ro00=calculate_r0(time1, time2, val1, val2)
ro=round(ro00,2)
print("R0 = ",ro)
plottaa_tapaukset_kuolemat(dates_a, dailycases1, dailydeaths1)
print(".")
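The main program smooths the daily case series with `scipy.signal.savgol_filter(dailycases1, 7, 1)` and then compares two points 21 days apart. The sketch below illustrates that step on synthetic data, not on THL or Solanpää figures: the series is invented and grows 3% per day, and the 5-day time constant is the `tau` value used in `calculate_r0`.

```python
import math
import numpy as np
from scipy.signal import savgol_filter

# Synthetic daily cases growing 3 % per day (invented data for illustration).
days = np.arange(60)
cases = 100.0 * np.exp(0.03 * days)

# Window of 7 samples, polynomial order 1: a local straight-line fit around each point.
smooth = savgol_filter(cases, 7, 1)

# Same index choice as the main program: second-to-last point and 21 days earlier.
pos = len(smooth) - 2
t1, t2 = pos - 21, pos
growth_rate = math.log(smooth[t2] / smooth[t1]) / (t2 - t1)
r_estimate = math.exp(growth_rate * 5.0)  # tau = 5 days, as in calculate_r0
```

On this noise-free series the recovered growth rate stays close to the 0.03 used to generate it. With real data the estimate depends strongly on the chosen window and on the two sample points.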

Licensing

You are free:

- to share – to copy, distribute and transmit the work

- to remix – to adapt the work

Under the following conditions:

- attribution – You must give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use.

- share alike – If you remix, transform, or build upon the material, you must distribute your contributions under the same or compatible license as the original.

File history

Click on a date/time to view the file as it appeared at that time.

| Date/Time | Dimensions | User | Comment |
|---|---|---|---|
| 08:22, 12 March 2021 (current) | 961 × 365 (84 KB) | Merikanto | Update |
| 13:10, 26 February 2021 | 1,040 × 431 (82 KB) | Merikanto | Update |
| 13:31, 16 February 2021 | 881 × 379 (77 KB) | Merikanto | Upload |
| 13:27, 16 February 2021 | 576 × 432 (1 KB) | Merikanto | Update attempt |
| 08:16, 27 January 2021 | 994 × 515 (73 KB) | Merikanto | Upload |
| 14:07, 10 January 2021 | 830 × 403 (70 KB) | Merikanto | Update of layout |
| 13:15, 10 January 2021 | 930 × 424 (70 KB) | Merikanto | Update |
| 11:26, 31 December 2020 | 854 × 469 (112 KB) | Merikanto | Update of graph |
| 12:08, 16 December 2020 | 935 × 407 (108 KB) | Merikanto | Update of graph |
| 12:18, 4 December 2020 | 950 × 451 (106 KB) | Merikanto | Upload |


File usage on Commons

There are no pages that use this file.

Metadata


| Width | 768.96pt |
|---|---|
| Height | 292.32pt |