File:Koronavirustapaukset suomessa ennuste sarima 1.svg

From Wikimedia Commons, the free media repository

Jump to navigation

Jump to search

Size of this PNG preview of this SVG file: 800 × 400 pixels. Other resolutions: 320 × 160 pixels | 640 × 320 pixels | 1,024 × 512 pixels | 1,280 × 641 pixels | 2,560 × 1,281 pixels | 1,121 × 561 pixels.

Original file (SVG file, nominally 1,121 × 561 pixels, file size: 46 KB)

File information

Structured data

Captions

Captions

Add a one-line explanation of what this file represents

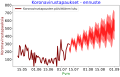

Koronavirustapaukset Suomessa - ennuste

Summary

[edit]| DescriptionKoronavirustapaukset suomessa ennuste sarima 1.svg |

Suomi: Koronavirustapaukset Suomessa - ennuste |

| Date | |

| Source | Own work |

| Author | Merikanto |

| SVG development InfoField |

| Python 3 code to produce image |

|---|

##################################################################

##

## COVID-19 Finland short-term forecast

## Python 3 script

##

## sarima

## Input from internet site: cases, recovered, deaths.

##

## version 0004.0004

## 07.08.2022

##

##################################################################

import math as math

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import matplotlib.ticker as ticker

import locale

from datetime import datetime, timedelta

import matplotlib.dates as mdates

from scipy import interpolate

import scipy.signal

from matplotlib.ticker import (MultipleLocator, FormatStrFormatter,

AutoMinorLocator, MaxNLocator)

from scipy.signal import savgol_filter

## ggplot

#from plotnine import *

##from mizani.breaks import date_breaks, minor_breaks

##from mizani.formatters import date_format

from bs4 import BeautifulSoup

import requests

import locale

from datetime import datetime, timedelta

import matplotlib.ticker as ticker

import matplotlib.dates as mdates

from matplotlib.ticker import (MultipleLocator, FormatStrFormatter,

AutoMinorLocator)

from matplotlib.ticker import MaxNLocator

from matplotlib.ticker import ScalarFormatter

from statsmodels.tsa.statespace.sarimax import SARIMAX

from statsmodels.tsa.arima.model import ARIMA

from numpy import fft

from datetime import date

##################################

#### asetukset parameters

## pohjadatan rajat: limits of base data

today = date.today()

#print("Today's date:", today)

#daybeforeyesterday = datetime.today() - timedelta(days=2)

paiva1="2022-05-01"

paiva2="2022-07-10"

paiva2a="2022-07-10"

#paiva2=daybeforeyesterday

## ennusteen rajat: forecast limits

taika1=paiva2

taika2="2022-12-01"

#paiva1="2020-10-15"

#paiva2="2020-12-23"

## ennusteen rajat: forecast limits

#taika1="2020-12-23"

#taika2="2021-01-15"

## y-akselin rajat

ymax1=10000

ymax2=40

vormat1='%Y-%m-%d'

def fourierExtrapolation(x, n_predict):

n = x.size

n_harm = 10 # number of harmonics in model

t = np.arange(0, n)

p = np.polyfit(t, x, 1) # find linear trend in x

x_notrend = x - p[0] * t # detrended x

x_freqdom = fft.fft(x_notrend) # detrended x in frequency domain

f = fft.fftfreq(n) # frequencies

indexes = range(n)

# sort indexes by frequency, lower -> higher

#indexes.sort(key = lambda i: np.absolute(f[i]))

t = np.arange(0, n + n_predict)

restored_sig = np.zeros(t.size)

for i in indexes[:1 + n_harm * 2]:

ampli = np.absolute(x_freqdom[i]) / n # amplitude

phase = np.angle(x_freqdom[i]) # phase

restored_sig += ampli * np.cos(2 * np.pi * f[i] * t + phase)

return restored_sig + p[0] * t

def format_func(value, tick_number):

N = int(np.round(value/10))

if N == 0:

return "0"

else:

return r"${0}\pv$".format(N)

## very basic exponential r0 calculation

def calculate_r0(time1, time2, val1, val2):

k=0

td=time2-time1

##

#optim

#td=1

gr0=math.log(val2/val1)

gr=gr0/td

if(gr!=0):

td= math.log(2.0)/gr

else:

return(1)

tau=5.0

k=math.log(2.0)/td

r0=math.exp(k*tau)

if(r0==32):

r0=1

if(r0>32):

r0=4

return(r0)

def cut_by_dates(dfx, start_date, end_date):

mask = (dfx['Date'] >= start_date) & (dfx['Date'] <= end_date)

dfx2 = dfx.loc[mask]

#print(dfx2)

return(dfx2)

def load_country_cases(maa):

dfin = pd.read_csv('https://datahub.io/core/covid-19/r/countries-aggregated.csv', parse_dates=['Date'])

countries = [maa]

dfin = dfin[dfin['Country'].isin(countries)]

#print (head(dfin))

#quit(-1)

selected_columns = dfin[["Date", "Confirmed", "Recovered", "Deaths"]]

df2 = selected_columns.copy()

df=df2

len1=len(df["Date"])

aktiv2= [None] * len1

for n in range(0,len1-1):

aktiv2[n]=0

dates=df['Date']

rekov1=df['Recovered']

konf1=df['Confirmed']

death1=df['Deaths']

#print(dates)

spanni=6

#print(rekov1)

#quit(-1)

rulla = rekov1.rolling(window=spanni).mean()

rulla2 = rulla.rolling(window=spanni).mean()

tulosrulla=rulla2

tulosrulla= tulosrulla.replace(np.nan, 0)

tulosrulla=np.array(tulosrulla).astype(int)

rulla2=tulosrulla

x=np.linspace(0,len1,len1);

#print("kupla")

#print(tulosrulla)

#print(konf1)

#print(death1)

#print(aktiv2)

konf1=np.array(konf1).astype(int)

death1=np.array(death1).astype(int)

#print(konf1)

#quit(-1)

for n in range(0,(len1-1)):

#print("luzmu")

rulla2[n]=tulosrulla[n]

#print ("luzmu2")

#aktiv2[n]=konf1[n]-death1[n]-rulla2[n]

aktiv2[n]=konf1[n]

#print(rulla2[n])

#quit(-1)

#aktiv3=np.array(aktiv2).astype(int)

dailycases1= [0] * len1

dailydeaths1= [0] * len1

for n in range(1,(len1-1)):

dailycases1[n]=konf1[n]-konf1[n-1]

if (dailycases1[n]<0): dailycases1[n]=0

for n in range(1,(len1-1)):

dailydeaths1[n]=death1[n]-death1[n-1]

if (dailydeaths1[n]<0): dailydeaths1[n]=0

#quit(-1)

df.insert (2, "Daily_Cases", dailycases1)

df.insert (3, "Daily_Deaths", dailydeaths1)

df['ActiveEst']=aktiv2

#print (df)

dfout = df[['Date', 'Confirmed','Deaths','Recovered', 'ActiveEst','Daily_Cases','Daily_Deaths']]

#print(df)

#print(dfout)

print(".")

return(dfout)

def get_solanpaa_fi_data():

url="https://covid19.solanpaa.fi/data/fin_cases.json"

response = requests.get(url,allow_redirects=True)

open('solanpaa_fi.json', 'w').write(response.text)

with open('solanpaa_fi.json') as f:

sola1=pd.read_json(f)

#sola1_top = sola1.head()

#print (sola1_top)

#Rt […]

#Rt_lower […]

#Rt_upper […]

#Rt_lower50 […]

#Rt_upper50 […]

#Rt_lower90 […]

#Rt_upper90 […]

#new_cases_uks […]

#new_cases_uks_lower50 […]

#new_cases_uks_upper50 […]

#new_cases_uks_lower90 […]

#new_cases_uks_upper90 […]

#new_cases_uks_lower […]

#new_cases_uks_upper […]

dada1=sola1["date"]

casa1=sola1["cases"]

death1=sola1["deaths"]

newcasa1=sola1["new_cases"]

newdeath1=sola1["new_deaths"]

hosp1=sola1["hospitalized"]

icu1=sola1["in_icu"]

rt=sola1["Rt"]

newcasauks=sola1["new_cases_uks"]

data = {'Date':dada1,

'Tapauksia':casa1,

'Kuolemia':death1,

'Sairaalassa':hosp1,

'Teholla':icu1,

'Uusia_tapauksia':newcasa1,

'Uusia_kuolemia':newdeath1,

'R':rt,

'Uusia_tapauksia_ennuste':newcasauks,

}

df = pd.DataFrame(data)

return(df)

def get_ecdc_fi_hospital_data():

url="https://opendata.ecdc.europa.eu/covid19/hospitalicuadmissionrates/json/"

response = requests.get(url,allow_redirects=True)

open('ecdc_hoic.json', 'w').write(response.text)

with open('ecdc_hoic.json') as f:

sola1=pd.read_json(f)

#print(sola1.head())

sola2=sola1.loc[sola1["country"]=='Finland']

#sola2.to_csv (r'ecdc_hospital_finland_origo.csv', index = True, header=True, sep=';')

#print(sola2.head())

dada0=sola2["date"]

hosp0=sola2["value"]

country0=sola2["country"]

len1=len(dada0)

len2=int(len1/2)

#print (len2)

dada1=dada0[1:len2-1]

hosp1=np.array(hosp0[1:len2-1])

icu1=np.array(hosp0[len2:len1])

#print(dada1)

print (icu1)

quit(-1)

data = {'Date':dada1,

'Sairaalassa':hosp1,

'Teholla':icu1

}

df = pd.DataFrame(data)

df.to_csv (r'ecdc_hospital_finland.csv', index = True, header=True, sep=';')

return df

#########################################################################

#########################################################################

##########################################################################

#df=load_country_cases('Finland')

#df=load_fin_wiki_data()

df=get_solanpaa_fi_data()

#print(df)

#quit(-1)

df2=cut_by_dates(df, paiva1,paiva2)

print(df2)

#quit(-1)

#############################

############# main proge

dates0=df2['Date']

cases0=df2['Uusia_tapauksia']

#print (cases0)

#quit()

dailycases1=np.array(cases0).astype(int)

#dates=np.array(dates0).to_pydatetime()

dates=dates0

print(dates0)

print(cases0)

print (dailycases1)

print (len(dailycases1))

#quit(-1)

len1=len(dailycases1)

dailycases_savgol_1 = scipy.signal.savgol_filter(dailycases1,7, 1)

pos1=len(dailycases_savgol_1)-2

time2=pos1-0

time1=pos1-21

val1=dailycases_savgol_1[time1]

val2=dailycases_savgol_1[time2]

ro00=calculate_r0(time1, time2, val1, val2)

ro=round(ro00,2)

print("R0")

print (ro)

yvalue=dailycases1

#rng = pd.date_range(paiva1, paiva2, freq='d')

start1 = np.datetime64(paiva1)

end1 = np.datetime64(paiva2)

start2 = np.datetime64(taika1)

end2 = np.datetime64(taika2)

#days1 = np.linspace(start1.astype('f8'), end1.astype('f8'), dtype='<M8[D]')

#napapaiva1 = np.datetime64("2020-04-01")

timedelta1= np.timedelta64(len(dailycases1),'D')

#napapaiva2 = napapaiva1+timedelta1

#dada1 = np.linspace(napapaiva1.astype('f8'), napapaiva2.astype('f8'), dtype='<M8[D]')

days1 = pd.date_range(start1, end1, periods=len(dailycases1)).to_pydatetime()

days2 = np.linspace(start2.astype('f8'), end2.astype('f8'), dtype='<M8[D]')

days3 = np.linspace(start1.astype('f8'), end2.astype('f8'), dtype='<M8[D]')

len1=len(days1)

len2=len(days2)

len3=len(days3)

#print (len(days1))

#print (len(yvalue))

#quit(-1)

xdays1 = pd.date_range(paiva1, paiva2a, freq='d')

print (xdays1)

print (yvalue)

print (len(xdays1))

print (len(yvalue))

#quit(-1)

dfs = pd.DataFrame({ 'Datetime': xdays1, 'Timestamp': xdays1, 'Count': yvalue })

#quit(-1)

xdays2 = pd.date_range(taika1, taika2, freq='d')

dfs2 = pd.DataFrame({ 'Datetime': xdays2, 'Timestamp': xdays2, 'Count': np.random.randn(len(xdays2)) })

#quit(-1)

#Aggregating the dataset at daily level

dfs.Timestamp = pd.to_datetime(dfs.Datetime,format='%Y-%m-%d')

dfs.index = dfs.Timestamp

y_hat_avg = dfs2.copy()

#quit(-1)

fit1 = SARIMAX(dfs.Count, order=(2, 1, 4), seasonal_order=(0,1,1,7)).fit()

#quit(-1)

sarimabegin1=datetime.strptime(taika1 ,vormat1)

sarimaend1=datetime.strptime(taika2 ,vormat1)

print(sarimabegin1)

print(sarimaend1)

#quit (-1)

sarima1 = fit1.predict(start=sarimabegin1, end=sarimaend1, dynamic=True)

#quit(-1)

fcast_index = pd.to_datetime(xdays2)

#print ("kk")

print (fcast_index)

#quit(-1)

pred_uc = fit1.get_forecast(steps=len(fcast_index), index=fcast_index)

#quit(-1)

pred_ic = pred_uc.conf_int()

#quit(-1)

print (pred_ic)

#print (pred_mean)

#quit(-1)

pred_upper=np.array(pred_ic['lower Count'])

pred_lower=np.array(pred_ic['upper Count'])

#quit(-1)

#pred_sarima=np.array(sarima1)

q1=(pred_upper-pred_lower)/2

q2=(pred_upper+pred_lower)/2

pu1=q2+q1/4

pl1=q2-q1/4

pu2=q2+q1/10

pl2=q2-q1/10

days22=np.array(days2)

len2=len(days22)

#quit(-1)

#fourier_forecast1 = fourierExtrapolation(np.array(yvalue), len2)

#fourier_forecast2=fourier_forecast1[:len2]

#########################

########################

fig, ax = plt.subplots(constrained_layout=True)

ax.legend(fontsize=14)

plt.xticks(fontsize=18)

plt.yticks(fontsize=18, rotation=0)

ax.set_ylim(9,ymax1)

ax.set_xlabel('Pvm', color='g',size=18)

ax.set_ylabel('Koronavirustapauksia', color='#800000',size=18)

ax.set_title('Koronavirustapaukset - ennuste', color='b',size=22)

#ax2.set_ylabel('Sairaalassa', color='#3f7f00', size=18)

ax.tick_params(axis='both', which='major', labelsize=18)

plt.plot( dfs['Count'], '#800000',linewidth=4.0,label='Koronavirustapausten päivittäinen luku')

#plt.plot(sarima1, '#7f0000',linestyle="--",linewidth=4.0,label='SARIMA-ennuste')

#plt.fill_between(xdays2,pred_upper, pred_lower, color='red', alpha=0.25, label="Ennusteen luottamusväli")

plt.fill_between(xdays2,pu1,pl1, color='red', alpha=0.50)

plt.fill_between(xdays2,pu2,pl2, color='red', alpha=0.75)

#plt.plot(xf,fourier_forecast2, '#007f00',linestyle="-",linewidth=4.0,label='Fourier-ennuste')

#ax.set_xlim(alkupvm,loppupvm)

dateformat1 = mdates.DateFormatter('%d.%m')

ax.xaxis.set_major_formatter(dateformat1)

#ax2.yaxis.set_major_locator(MaxNLocator(integer=True))

ax.legend(fontsize=14, loc="upper left")

#ax2.legend(fontsize=14, loc="center left")

#plt.legend()

plt.show()

|

Licensing

[edit]I, the copyright holder of this work, hereby publish it under the following license:

This file is licensed under the Creative Commons Attribution-Share Alike 4.0 International license.

- You are free:

- to share – to copy, distribute and transmit the work

- to remix – to adapt the work

- Under the following conditions:

- attribution – You must give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use.

- share alike – If you remix, transform, or build upon the material, you must distribute your contributions under the same or compatible license as the original.

File history

Click on a date/time to view the file as it appeared at that time.

| Date/Time | Thumbnail | Dimensions | User | Comment | |

|---|---|---|---|---|---|

| current | 06:59, 7 August 2022 |  | 1,121 × 561 (46 KB) | Merikanto (talk | contribs) | update |

| 06:17, 14 April 2022 |  | 806 × 426 (40 KB) | Merikanto (talk | contribs) | Update | |

| 09:45, 8 December 2021 | 1,115 × 432 (43 KB) | Merikanto (talk | contribs) | update | ||

| 14:26, 5 August 2021 |  | 729 × 454 (40 KB) | Merikanto (talk | contribs) | Update | |

| 12:18, 13 July 2021 |  | 761 × 483 (44 KB) | Merikanto (talk | contribs) | Update | |

| 07:20, 29 June 2021 |  | 827 × 453 (43 KB) | Merikanto (talk | contribs) | update | |

| 06:38, 25 June 2021 |  | 865 × 388 (37 KB) | Merikanto (talk | contribs) | update | |

| 12:05, 15 June 2021 |  | 923 × 417 (39 KB) | Merikanto (talk | contribs) | update | |

| 11:35, 13 May 2021 | 962 × 347 (38 KB) | Merikanto (talk | contribs) | update | ||

| 08:49, 25 April 2021 | 889 × 361 (36 KB) | Merikanto (talk | contribs) | Upload |

You cannot overwrite this file.

File usage on Commons

The following page uses this file:

- File:Koronavirustapaukset suomessa ennuste stheno 1.svg (file redirect)

Metadata

This file contains additional information such as Exif metadata which may have been added by the digital camera, scanner, or software program used to create or digitize it. If the file has been modified from its original state, some details such as the timestamp may not fully reflect those of the original file. The timestamp is only as accurate as the clock in the camera, and it may be completely wrong.

| Width | 896.4pt |

|---|---|

| Height | 448.56pt |